VFX in games refers to the visual effects players see while exploring or interacting with game elements. Players rarely notice these effects consciously, but they feel the difference when VFX is ineffective or missing altogether. Effective VFX helps players understand the meaning behind their actions, like the power behind an attack or what caused their health to drop, through details like hit sparks during combat or smoke rising from a fire.

VFX artists are in charge of creating and implementing VFX. Artist intention, game performance and player emotion all need to work together so that the VFX creates an immersive experience without impeding the playthrough. Keep reading for a detailed overview of VFX in games, including the different types and artist roles, alongside real game examples. There’s also information on the software used to create VFX and on courses for both beginner and experienced artists.

What is VFX in video games?

VFX in video games refers to the visual effects players see and experience during gameplay. VFX in movies is pre-rendered and never changes, so viewers only need to watch. VFX in video games is rendered in real time while the player interacts with the game and changes according to their actions, contributing to storytelling, clarity and immersion.

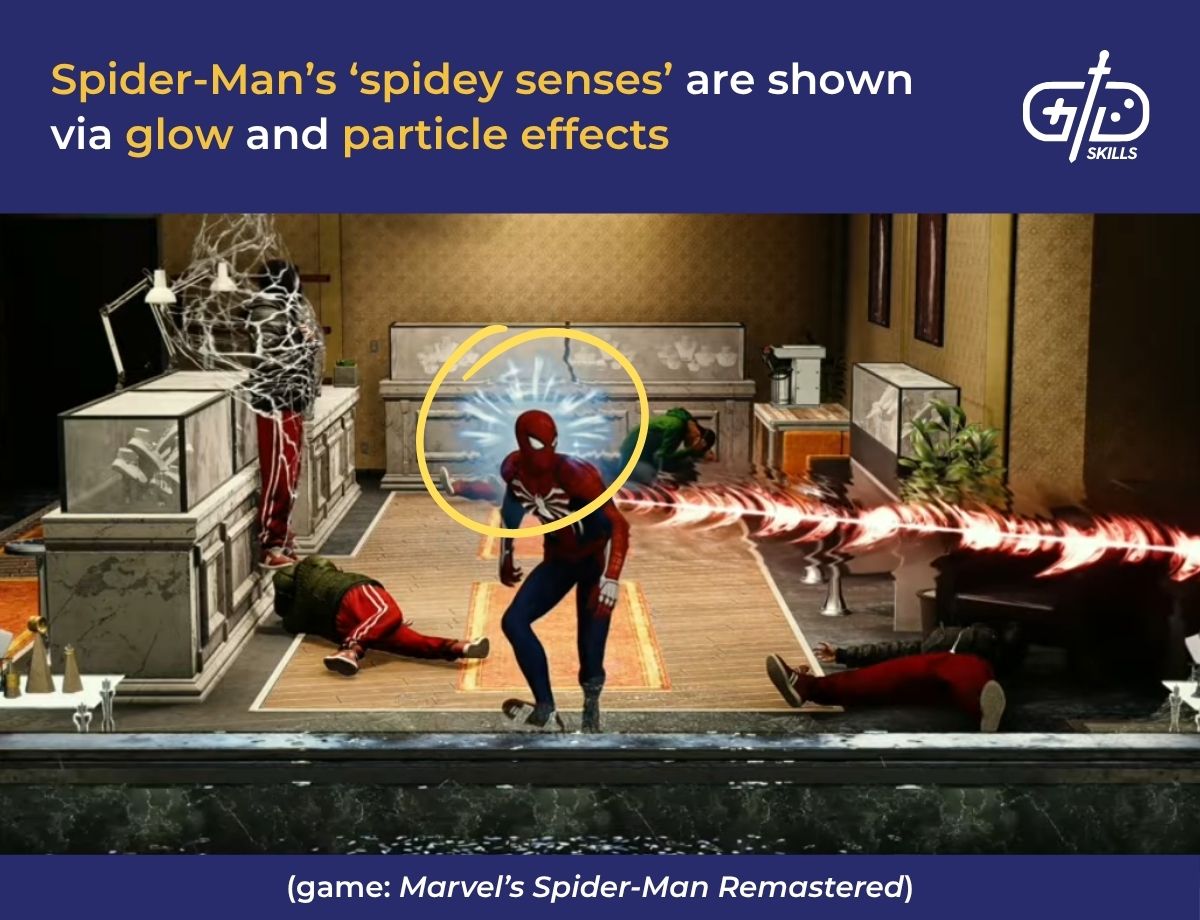

Visual storytelling in games is when artists blend VFX with narrative to create immersive experiences. The concept follows the principle of “show, don’t tell,” as when RPGs use VFX for spells and energy auras. Games with element-based magic systems tend to use red auras for fire attacks and blue for water attacks. These details communicate a character’s identity and power to players visually.

Visual storytelling includes environmental storytelling, so VFX for dynamic weather, like rain or fog, is designed as part of the game’s systems. Games use weather to advance narratives or add tension, setting the mood for cutscenes and locations. In Genshin Impact, the weather in Stormterror’s Lair stays dull and cloudy until the quest there is completed. Paired with slow, melancholic music, the bleak, depressing mood fits the location’s lore.

VFX improves a game’s clarity so that players feel the impact behind their actions without having to study damage numbers. A fireball in an action game that deals significant damage on paper but produces only a small explosion is a confusing mismatch. Making the fireball visibly affect the terrain where it lands, and adding smoke, lets the player feel its power.

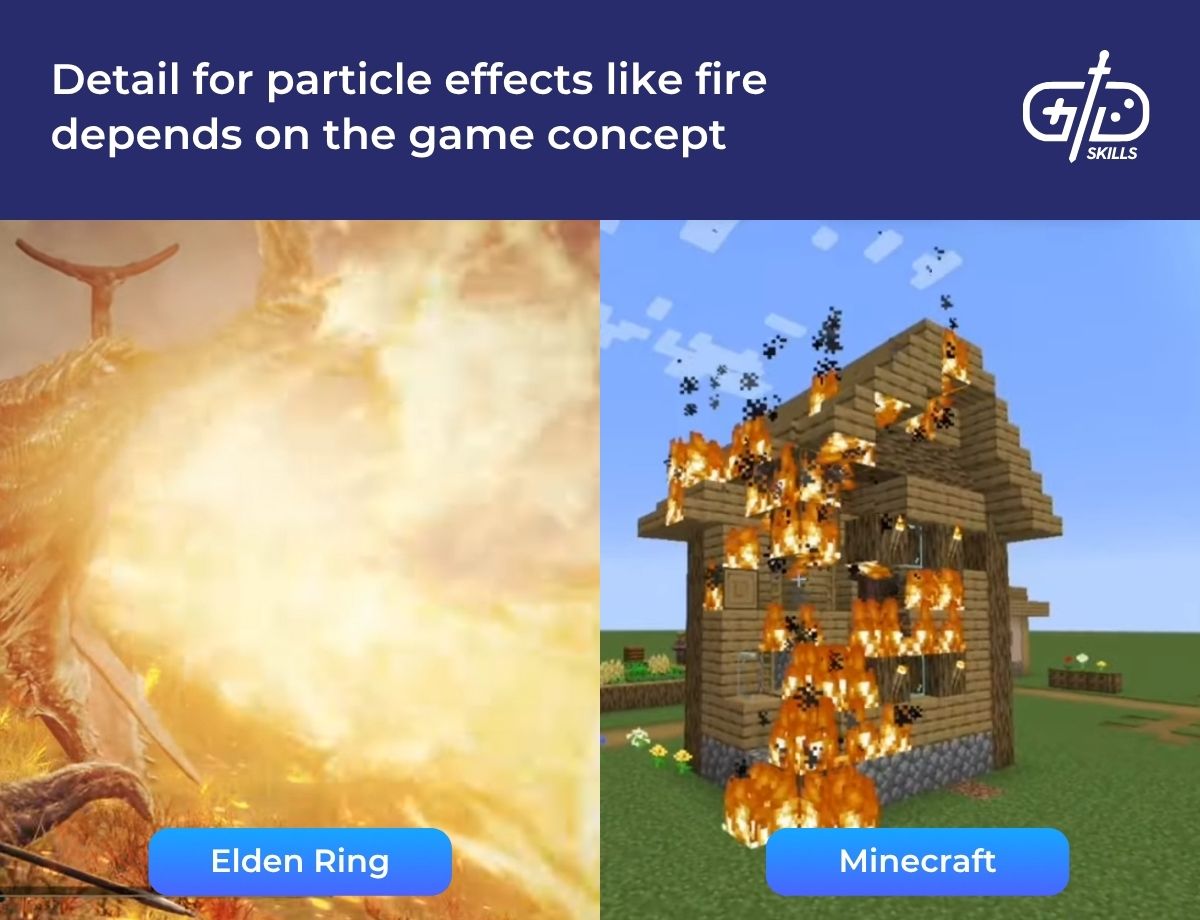

The amount of VFX detail in a game depends on the game type, but VFX quality is held to the same standard across genres. VFX detail is how complex and rich the visual effects are, such as their particle density. Games like Minecraft with blocky, pixel-art visuals require less detail: artists use fewer particles for elements like smoke, producing square smoke puffs that match the game’s aesthetic. VFX quality is high when an effect’s visual impact is maintained without compromising the game’s performance or smoothness. Artists apply different levels of detail depending on the game type so that effects are optimized for the platform and genre.

Optimization is essential for mobile games because of hardware constraints, so before creating any effects, I discuss my ideas with other devs. Defining the limits early lets me make informed compromises between quality and efficiency. A mobile game doesn’t need the same amount of detail as a PC game, which is why there’s a distinct difference in detail between games like Subway Surfers and Call of Duty. For example, we write shaders in code instead of using shader graphs for better performance, and we avoid adding lighting because it’s expensive.
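One way to pin down limits early is to give each platform an explicit effect budget that every effect has to fit inside. The sketch below is a minimal illustration under assumptions: the `EffectBudget` class, the two tiers and all the numbers are hypothetical, and real budgets come from profiling on target hardware.

```python
from dataclasses import dataclass

# Hypothetical per-platform limits; the numbers are illustrative, not measured.
@dataclass(frozen=True)
class EffectBudget:
    max_particles: int           # live particles allowed for one effect
    allow_realtime_lights: bool  # mirrors the "no lighting on mobile" rule above

BUDGETS = {
    "mobile": EffectBudget(max_particles=150, allow_realtime_lights=False),
    "pc": EffectBudget(max_particles=2000, allow_realtime_lights=True),
}

def fit_to_budget(requested_particles: int, wants_light: bool, platform: str) -> dict:
    """Clamp an effect's requested settings to the chosen platform's budget."""
    budget = BUDGETS[platform]
    return {
        "particles": min(requested_particles, budget.max_particles),
        "light": wants_light and budget.allow_realtime_lights,
    }

# The same fireball request, resolved for two platforms.
print(fit_to_budget(600, wants_light=True, platform="mobile"))
print(fit_to_budget(600, wants_light=True, platform="pc"))
```

Keeping the limits in one table means a compromise agreed with the team is enforced everywhere, rather than rediscovered per effect.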

What are the types of VFX in games?

The types of VFX in games include particle effects, character VFX and environmental VFX, which work together to enhance the game world. Post-processing effects, physics-based VFX and shader VFX add depth to movement and the world. Designers use UI VFX to respond to player actions and provide information about what each button does.

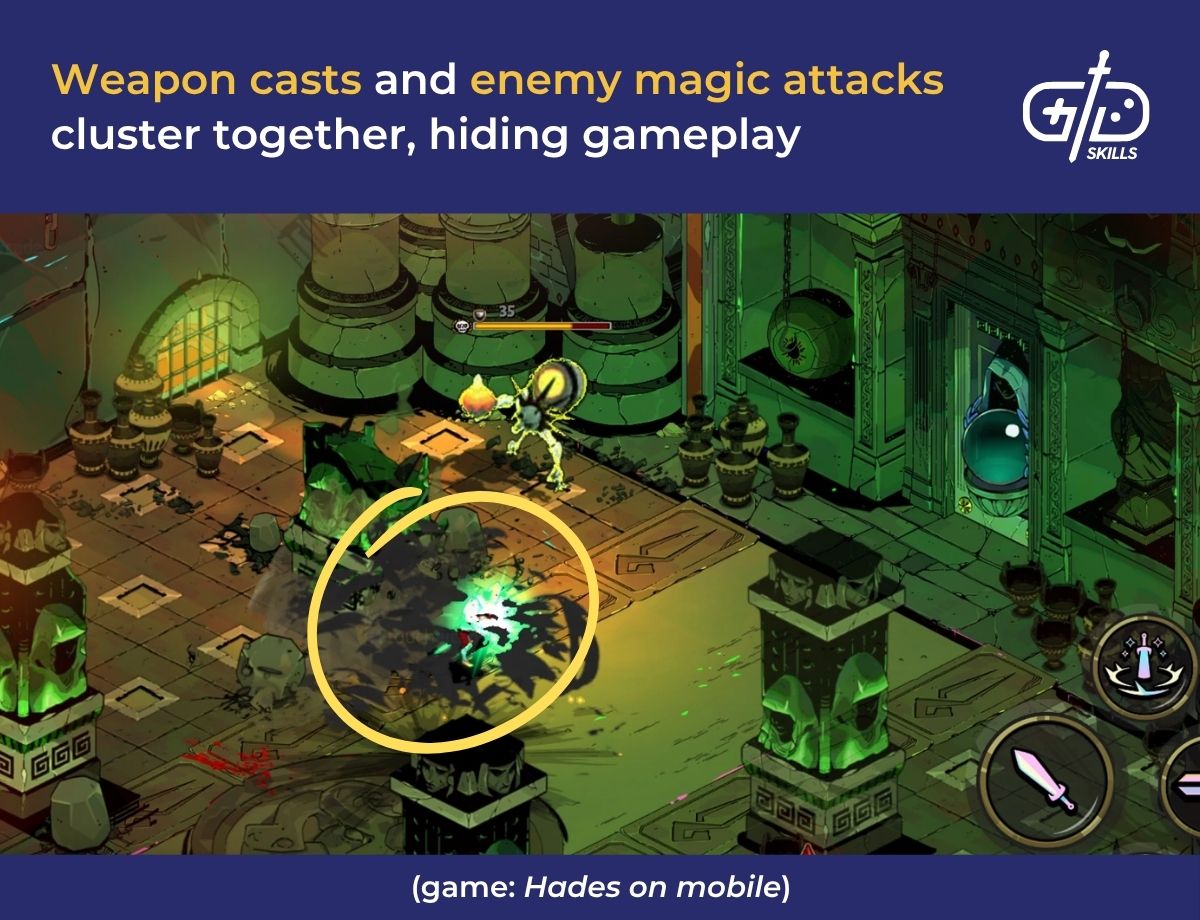

Particle effects simulate elements like fire, smoke, magic or dust within the game environment. The term covers any effect made of visible particles floating around, and such effects are especially useful in action-heavy gameplay. Games with magic systems use colored magic trails to help players tell ally and enemy attacks apart, so the gameplay doesn’t become frustrating.
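To make the idea concrete, here is a minimal CPU particle emitter. Engines like Unity and Unreal implement this far more efficiently (often on the GPU); the class names, team palette and parameters below are hypothetical, chosen to show how tinting every particle by team keeps ally and enemy attacks distinguishable.

```python
import random
from dataclasses import dataclass

# Illustrative team palette (RGB, 0-1); not taken from any specific game.
TEAM_COLORS = {"ally": (0.2, 0.6, 1.0), "enemy": (1.0, 0.25, 0.2)}

@dataclass
class Particle:
    x: float
    y: float
    vx: float
    vy: float
    age: float
    lifetime: float
    color: tuple

class Emitter:
    """Minimal emitter: spawns particles at a fixed rate, moves them and expires old ones."""

    def __init__(self, team: str, rate: float, lifetime: float, seed: int = 0):
        self.color = TEAM_COLORS[team]   # every particle inherits the team tint
        self.rate = rate                 # particles spawned per second
        self.lifetime = lifetime         # seconds each particle stays alive
        self.particles: list[Particle] = []
        self._spawn_accumulator = 0.0
        self._rng = random.Random(seed)

    def update(self, dt: float) -> None:
        # Accumulate fractional spawns so the emission rate holds at any frame rate.
        self._spawn_accumulator += self.rate * dt
        while self._spawn_accumulator >= 1.0:
            self._spawn_accumulator -= 1.0
            self.particles.append(Particle(
                x=0.0, y=0.0,
                vx=self._rng.uniform(-1.0, 1.0), vy=self._rng.uniform(0.5, 2.0),
                age=0.0, lifetime=self.lifetime, color=self.color))
        # Move and age every particle, then drop the ones past their lifetime.
        for p in self.particles:
            p.x += p.vx * dt
            p.y += p.vy * dt
            p.age += dt
        self.particles = [p for p in self.particles if p.age < p.lifetime]
```

Because the tint is decided by the emitter rather than per effect, every spell a team casts reads the same way, which is the readability property described above.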

In Hades on mobile, enemy attacks at higher levels use similar particle effects for magic bursts and weapon trails, making it hard to distinguish enemy attacks from long-range casts. Hardcore players may enjoy the visual spectacle, but for casual players, the low visibility makes gameplay frustrating and demotivating.

An effect I worked on used a mix of particle effects and animation for a beam gun booster that turned nine game objects into the same alien. We made large particles move around and change size in front of each object to hide the transformation. Behind them, an animation shrank the original object, and when the beam gun’s charge completed, the particles disappeared. The alien then appeared with its own animation to complete the transformation.
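A “cover and swap” sequence like this can be thought of as a timeline where each layer’s scale is a function of the charge progress. The sketch below is a hypothetical reconstruction, not the actual implementation: the smoothstep easing, the point at 30% of the charge where particles reach full size, and the durations are all assumptions.

```python
def smoothstep(x: float) -> float:
    """Ease-in/ease-out curve, clamped to [0, 1]."""
    x = max(0.0, min(1.0, x))
    return x * x * (3.0 - 2.0 * x)

def transform_state(t: float, charge_time: float = 1.0, appear_time: float = 0.4) -> dict:
    """Scales for each layer of a hypothetical transformation at time t (seconds)."""
    charge = t / charge_time
    if charge < 1.0:
        return {
            # Assumed: particles reach full size by 30% of the charge to cover the object early.
            "particle_scale": smoothstep(charge / 0.3),
            # The original object shrinks behind the particles over the whole charge.
            "original_scale": 1.0 - smoothstep(charge),
            "alien_scale": 0.0,
        }
    # Charge complete: particles vanish and the alien plays its own appear animation.
    return {
        "particle_scale": 0.0,
        "original_scale": 0.0,
        "alien_scale": smoothstep((t - charge_time) / appear_time),
    }
```

Driving all three layers from one clock keeps them in sync, so the object is never visibly shrinking before the particles cover it.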

Character VFX ties into particle effects, as character-specific visuals tend to include elements of smoke or magic. RPGs use character VFX for special abilities or powers used in combat, which are characterized by unique trails and shapes.

Ziggs from League of Legends shows how character VFX combines character traits with damage. His ultimate, Mega Inferno Bomb, reflects his identity as an explosives expert, so the resulting blast is large, with bursts of flame. The attack’s power is conveyed to the player while staying true to Ziggs’s identity. A visible circle also shows how much ground the attack covers.

Environmental VFX is when artists add weather elements like rain, fog, water and lightning to make worlds feel alive and immersive. Designing the weather so it changes based on gameplay mechanics, like rain in specific locations or during in-game timelines, helps make scenes emotional and gives incentives for players to explore and gather.

Stardew Valley uses environmental VFX to encourage gathering during specific weather. The green rain event, which occurs only once during the in-game summer on a random day, changes the visuals of the entire game. The town turns into an apocalyptic landscape overgrown with moss and fiddlehead ferns, both valuable resources. Players then gather as much as they can, incentivized by the dynamic weather.

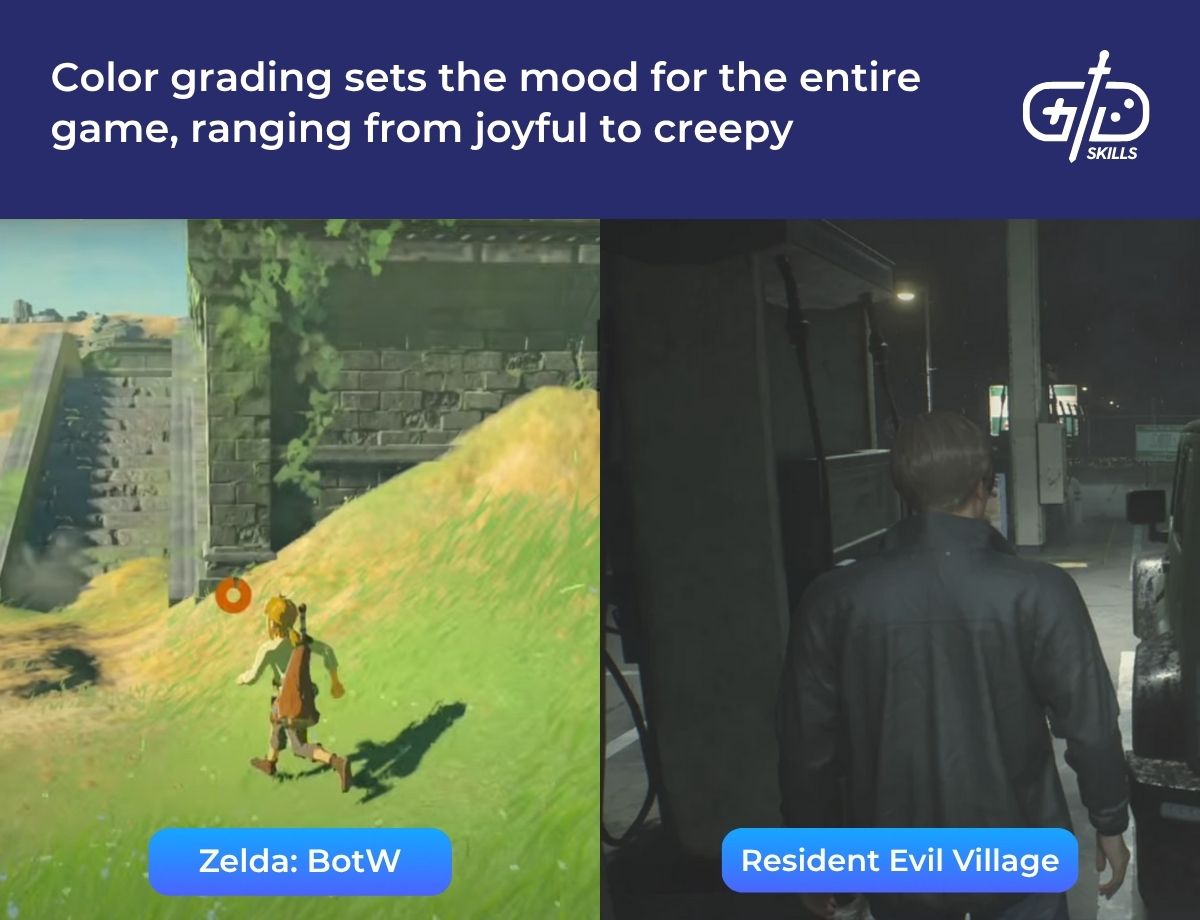

Post-processing refers to technical effects like bloom and color grading that improve the overall look and feel of the game. Adding bloom to already bright objects makes their glow spill over the edges naturally, similar to the sun in photos. The Legend of Zelda: Breath of the Wild uses bloom for sunlight and fireflies, which glow gently against its natural landscapes.
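Bloom is commonly built in three steps: isolate the pixels brighter than a threshold, blur them, and add the blur back on top of the image. The sketch below shows those steps on a tiny grayscale image with a box blur for readability; the function name and parameters are illustrative, and real engines run a downsampled, usually Gaussian-style blur on the GPU in HDR.

```python
def bloom(image: list[list[float]], threshold: float = 0.8,
          radius: int = 1, intensity: float = 1.0) -> list[list[float]]:
    """Toy bloom on a grayscale image with linear values (may exceed 1.0)."""
    h, w = len(image), len(image[0])
    # 1. Bright pass: keep only the energy above the threshold.
    bright = [[max(0.0, v - threshold) for v in row] for row in image]
    # 2. Box-blur the bright pass so the glow spreads into neighbouring pixels.
    blurred = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w:
                        total += bright[ny][nx]
                        count += 1
            blurred[y][x] = total / count
    # 3. Add the blurred glow back on top of the original image.
    return [[image[y][x] + intensity * blurred[y][x] for x in range(w)] for y in range(h)]

# A single very bright pixel now "spills" into its neighbours.
print(bloom([[0.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 0.0]], threshold=1.0))
```

Only values above the threshold contribute, which is why bloom affects already bright objects and leaves the rest of the scene untouched.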

Color grading applies a filter to the entire game, changing its mood. Colder colors with dark accents make settings feel dangerous, while warmer, brighter colors put a positive spin on locations. Resident Evil Village uses cold, bluish tones that create a creepy atmosphere and keep players on edge during gameplay.
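A color grade is ultimately a function applied to every pixel. Engines usually bake it into a lookup table (LUT); the sketch below instead applies three common controls directly to one pixel. The `grade` function, its parameter names and the simple temperature model are assumptions for illustration.

```python
def grade(rgb: tuple[float, float, float], temperature: float = 0.0,
          exposure: float = 1.0, saturation: float = 1.0) -> tuple[float, float, float]:
    """Apply a toy color grade to one linear RGB pixel (channels in 0..1).

    temperature < 0 cools the image (more blue); > 0 warms it (more red).
    """
    r, g, b = rgb
    # Simplified white-balance shift: push red and blue in opposite directions.
    r *= 1.0 + temperature
    b *= 1.0 - temperature
    # Exposure scales overall brightness.
    r, g, b = r * exposure, g * exposure, b * exposure
    # Saturation: blend each channel toward the pixel's luminance (Rec. 709 weights).
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b
    r, g, b = [luma + saturation * (c - luma) for c in (r, g, b)]
    # Clamp back into displayable range.
    return tuple(min(1.0, max(0.0, c)) for c in (r, g, b))

# A cold, dark, slightly desaturated grade applied to a neutral grey pixel.
print(grade((0.5, 0.5, 0.5), temperature=-0.2, exposure=0.8, saturation=0.8))
```

Because the same function runs on every pixel, one set of values changes the mood of the whole scene at once.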

Physics-based VFX mimics the movement of cloth, fluids and interactive elements in the game. Designing a field of grass to sway as the character runs through it adds realism and immersion to gameplay. In combat games, flying debris driven by gravity and collision force showcases the power behind movements. Battlefield V does this with explosions that toss debris around.
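A minimal debris sketch: pieces are launched with velocities scaled by the blast force, then gravity and a ground collision take over each frame. In a shipping game this is usually handled by the engine’s physics or particle system; the names, restitution and friction values here are illustrative assumptions.

```python
import random
from dataclasses import dataclass

GRAVITY = -9.81  # m/s^2 along the y axis

@dataclass
class Debris:
    x: float
    y: float
    vx: float
    vy: float

def explode(count: int, force: float, seed: int = 0) -> list[Debris]:
    """Spawn debris at the origin with upward-biased velocities scaled by the blast force."""
    rng = random.Random(seed)
    return [Debris(0.0, 0.0, rng.uniform(-1.0, 1.0) * force, rng.uniform(0.5, 1.0) * force)
            for _ in range(count)]

def step(pieces: list[Debris], dt: float, restitution: float = 0.3) -> None:
    """Advance debris with semi-implicit Euler and a simple bounce off the ground (y = 0)."""
    for p in pieces:
        p.vy += GRAVITY * dt
        p.x += p.vx * dt
        p.y += p.vy * dt
        if p.y < 0.0:
            p.y = 0.0
            p.vy = -p.vy * restitution  # lose energy on each bounce
            p.vx *= 0.8                 # illustrative ground friction
```

Scaling launch speed by the blast force is what makes a bigger explosion visibly throw debris higher and farther.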

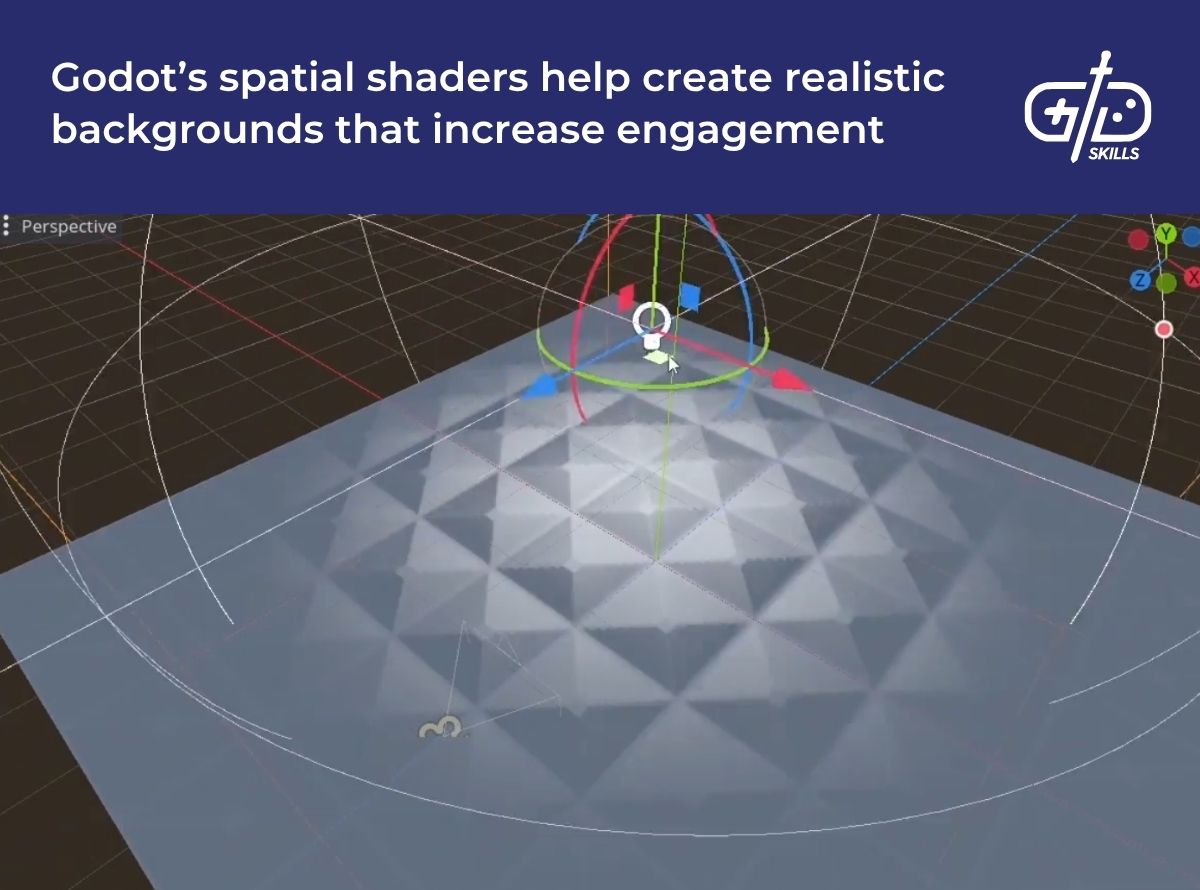

Technical artists create custom shaders that VFX artists use to add glow and distortion, giving a game a unique visual style that helps it stand out in the market. Apex Legends uses shaders for its orange barriers, making parts of the surface glow and appear slightly transparent so they stand out during gameplay. Moving patterns across the barriers also make it look like energy is flowing through them.
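Shaders like this are usually written in HLSL/GLSL or built in a shader graph, but the math is easy to follow in plain Python. The function below evaluates what such a fragment shader might compute for one pixel. It’s a hypothetical sketch, not Apex Legends’ actual shader: it combines a scrolling stripe pattern for the flowing-energy look with a Fresnel-style rim glow and partial transparency, and all parameter values are assumptions.

```python
import math

def barrier_fragment(v: float, time: float, n_dot_v: float,
                     scroll_speed: float = 0.5, stripes: float = 8.0,
                     base_alpha: float = 0.35) -> tuple[float, float]:
    """Evaluate a toy energy-barrier 'fragment shader' for one pixel.

    v        -- vertical texture coordinate in [0, 1]
    time     -- seconds since the effect started
    n_dot_v  -- cosine between surface normal and view direction (1 = facing camera)
    Returns (emission, alpha).
    """
    # Scrolling stripes: offsetting v by time makes the pattern appear to flow.
    wave = 0.5 + 0.5 * math.sin(2.0 * math.pi * stripes * (v - scroll_speed * time))
    # Fresnel-style rim: surfaces seen edge-on glow brighter.
    rim = (1.0 - max(0.0, min(1.0, n_dot_v))) ** 3
    emission = 0.3 * wave + rim
    # Mostly see-through, but more opaque where the energy is bright.
    alpha = min(1.0, base_alpha + 0.5 * emission)
    return emission, alpha
```

Feeding `time` into the pattern is all it takes to make the surface look alive; on a GPU, the same expression runs for every pixel of the barrier each frame.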

2D VFX in 3D games are used for stylized effects as well, following anime or cartoon art styles. Artists place flat images that behave like effects inside a 3D environment, like anime slashes for weapon movements. These kinds of effects stand out visually and match better if the art is already stylized to an extent. Overwatch uses flat 2D animations for hit sparks, but the explosions themselves are 3D.

In fast-paced games like Overwatch, adding 2D VFX against 3D environments improves performance, since 2D effects are lighter on the GPU than 3D simulations. Mobile games and indie titles make the most of this combination, since 2D effects are easy for players to read and more expressive than realistic ones. Adding pixel explosions to a game like The Last of Us won’t have the same effect as in a game like Super Smash Bros.
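Many 2D effects, such as hit sparks and slashes, are stored as flipbooks: a sprite sheet of hand-drawn frames played back on a flat quad. Each frame mostly comes down to picking the right region of one texture, which helps explain why such effects are cheap. The sketch below computes that region; the function name, the left-to-right, top-to-bottom frame layout and the bottom-up v axis are assumptions that vary between engines.

```python
def flipbook_uv(time: float, columns: int, rows: int, fps: float,
                loop: bool = True) -> tuple[float, float, float, float]:
    """Return (u_offset, v_offset, u_scale, v_scale) for the current sprite-sheet frame."""
    total = columns * rows
    frame = int(time * fps)
    # Looping effects wrap around; one-shot effects hold their last frame.
    frame = frame % total if loop else min(frame, total - 1)
    col = frame % columns
    row = frame // columns
    u_scale, v_scale = 1.0 / columns, 1.0 / rows
    # Assumed: texture v runs bottom-to-top, so the row index is flipped.
    return col * u_scale, 1.0 - (row + 1) * v_scale, u_scale, v_scale

# A 4x4 hit-spark sheet played at 16 fps: frame 5 after 0.3125 seconds.
print(flipbook_uv(0.3125, columns=4, rows=4, fps=16.0))
```

The returned offset and scale would typically be passed to the material so the quad samples only the current frame.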

VFX is especially useful in indie game development because it contributes to immersion, which increases further when VFX is combined with sound and animation. A 2024 study by Jassaui et al. suggests that devs should make sure the game runs smoothly while still showing off engaging visuals. On mobile, bright colors and effects that stand out help keep a game both fun and engaging.

UI VFX refers to visual cues for menu pop-ups, button presses or health bars. Boons in Hades II are an example of UI VFX: they’re pop-up rewards given as players progress, colored to match the Olympian who sends them. Boons are buffs for attack, health or speed that glow when selected, which makes it clear they’ve been applied.

Response and feedback effects are two additional kinds of VFX that increase clarity. Response effects are immediate visual reactions to player actions, like the mini explosions in Mario Party when characters successfully hit each other. Feedback effects are ongoing visual cues that reflect the current context and state of gameplay.

UI VFX supports players as they navigate the game, so combining it with response and feedback effects increases clarity. Health bars, for instance, mix feedback effects with animation, since the bar changes its appearance based on what happens to the player. These effects let players work out what impact their actions have, which gives them clarity in complex environments, as identified in Fadelli’s 2017 study from the University of California.
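Many health bars mix both kinds of effect: the fill drops instantly when the player is hit (response), while a lagging “damage trail” drains afterwards so the size of the hit stays readable (feedback). Below is a minimal sketch of that pattern; the `HealthBar` class, the delay and the drain rate are hypothetical values for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class HealthBar:
    """Health bar with a delayed 'damage trail' layer."""
    max_hp: float
    hp: float
    trail: float                 # value shown by the lagging trail segment
    delay: float = 0.5           # seconds before the trail starts draining (illustrative)
    drain_rate: float = 0.5      # fraction of the full bar drained per second (illustrative)
    _timer: float = field(default=0.0, init=False, repr=False)

    def take_damage(self, amount: float) -> None:
        # Response: the main fill drops immediately...
        self.hp = max(0.0, self.hp - amount)
        # ...while the trail waits, so the lost chunk stays visible for a moment.
        self._timer = self.delay

    def update(self, dt: float) -> None:
        if self._timer > 0.0:
            self._timer = max(0.0, self._timer - dt)
            return
        # Feedback: the trail drains toward the real value over time.
        self.trail = max(self.hp, self.trail - self.drain_rate * self.max_hp * dt)

    def fill_fractions(self) -> tuple[float, float]:
        """Return (current fill, trail fill) as fractions of the bar for rendering."""
        return self.hp / self.max_hp, self.trail / self.max_hp
```

Rendering the trail as a second, differently colored layer behind the fill is what turns a single number change into something the player can read at a glance.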

I design effects around their purpose in the game rather than their type. Effects that players see frequently, like gunshot flashes, need to be satisfying with clear intent. Likewise, effects that support character identities need to carry the story and be expressive. Overall, designing effects around their meaning and purpose within the game lets them give players engaging and clear gameplay.

What does a VFX artist do in the game industry?

A VFX artist in the game industry creates and integrates visual effects that highlight gameplay elements to increase immersion in a game. Artists pay attention to what effects match the game’s overall visual style and concept, and optimize the VFX so it doesn’t affect the game’s performance. They work with both game designers and technical artists to achieve that balance.

Key elements that artists create include particle effects, lighting and environmental effects. Particle effects include special character skills like Genshin Impact’s elemental attacks, enhanced with custom sprites such as wind slashes. Anemo Aether’s elemental skill generates a blast of wind, made apparent by glowing wind slashes, particle effects and the way the surrounding foliage moves. Artists also work with animators to turn concepts, such as how fabric moves with a character, into real-time effects that sync up with player movements.

I added VFX to the boosters in Sort Express!, which are rewards or purchased items that help players progress. I always consider clarity first in VFX design, so I knew it had to be obvious what the booster does. After that, I made the booster visually appealing so players feel drawn to buy them when they see them in the store. The booster has to showcase its impact, too, so I used glowing effects and explosions to help bring out its usefulness.

In small indie studios and teams, VFX artists are often expected to both visualize and implement effects while meeting all the technical requirements. As a result, artists need a solid base of technical knowledge, such as how to integrate particle simulations and optimize for performance. The methods for these change with technology, so artists also need to keep up by researching and adapting to new tools, engines and rendering methods.

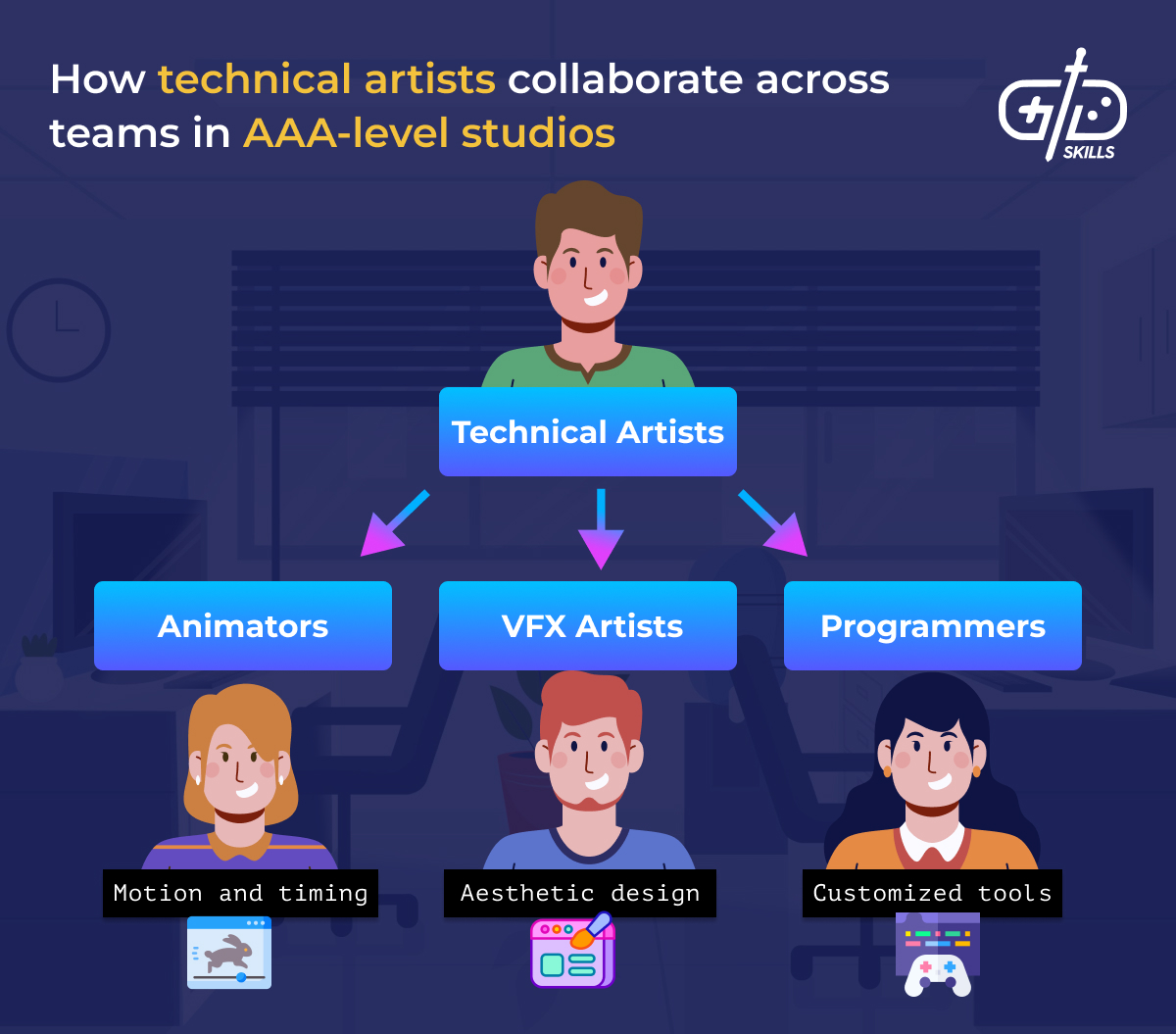

In large, AAA-level studios, VFX artists collaborate with technical artists. Technical artists work across teams; for example, they work with programmers to build tools for artists that don’t strain game performance. VFX artists don’t span disciplines in the same way: they focus on creating the effects, while technical artists make sure the visuals, including animations and UI, run without lag and look appealing.

VFX artists at different experience levels take on different roles. Entry-level artists typically start in junior roles at indie or mobile studios, whereas more experienced artists become lead artists or supervisors in AAA studios as well as indie or mobile teams. Highly experienced devs and artists also work at indie studios, so career paths depend on multiple factors, including where artists prefer to work.

A VFX artist’s salary in the game industry also varies depending on their experience, location and studio size. The table below shows the general salary ranges for VFX artists in the US.

| Experience | Annual Pay | Hourly Pay | Roles |
|---|---|---|---|
| Entry-level artists with 1-3 years of experience | $66,000 – $71,000 | $33 – $35 | Junior roles in indie or mobile studios |
| Mid-level artists with 3-5 years of experience | $77,000 – $88,000 | $37 – $43 | Standard role in mid-size or AAA studios |
| Senior-level artists with 5-10 years of experience | $99,000 – $120,000 | $46 – $57 | Lead or principal artist in AAA studios |
| Artists with more than 10 years of experience | $132,000 – $152,000 | Over $51 | Supervisors, technical directors or studio veterans |
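Annual and hourly figures can be compared by assuming a full-time year of 2,080 hours (40 hours × 52 weeks). The short sketch below converts each annual range in the table this way; the 2,080-hour figure is an assumption, so the results are only a rough cross-check, not a replacement for the table.

```python
HOURS_PER_YEAR = 40 * 52  # assumed full-time schedule: 2,080 hours

def annual_to_hourly(annual: float, hours: int = HOURS_PER_YEAR) -> float:
    """Convert an annual salary to an approximate hourly rate."""
    return annual / hours

# Annual ranges copied from the table above.
ranges = {
    "Entry-level": (66_000, 71_000),
    "Mid-level": (77_000, 88_000),
    "Senior-level": (99_000, 120_000),
    "10+ years": (132_000, 152_000),
}

for level, (low, high) in ranges.items():
    print(f"{level}: ${annual_to_hourly(low):.2f} - ${annual_to_hourly(high):.2f} per hour")
```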

Freelancers earn about $40 – $80 per hour, depending on the project’s scope and their reputation. Artists who are skilled in real-time effects and optimization are in higher demand due to industry trends, so having knowledge of up-to-date software and tools is valuable.

Which games have the best VFX?

Games known for the best VFX tend to come from the fantasy, action, fighting and RPG genres. Each of these genres relies heavily on fast-paced gameplay packed with movement, colorful visuals for character skills, or both. It’s difficult to single out individual titles with the best VFX, as every game takes its own approach to rendering and design. The titles below are examples from these genres that are known for their VFX.

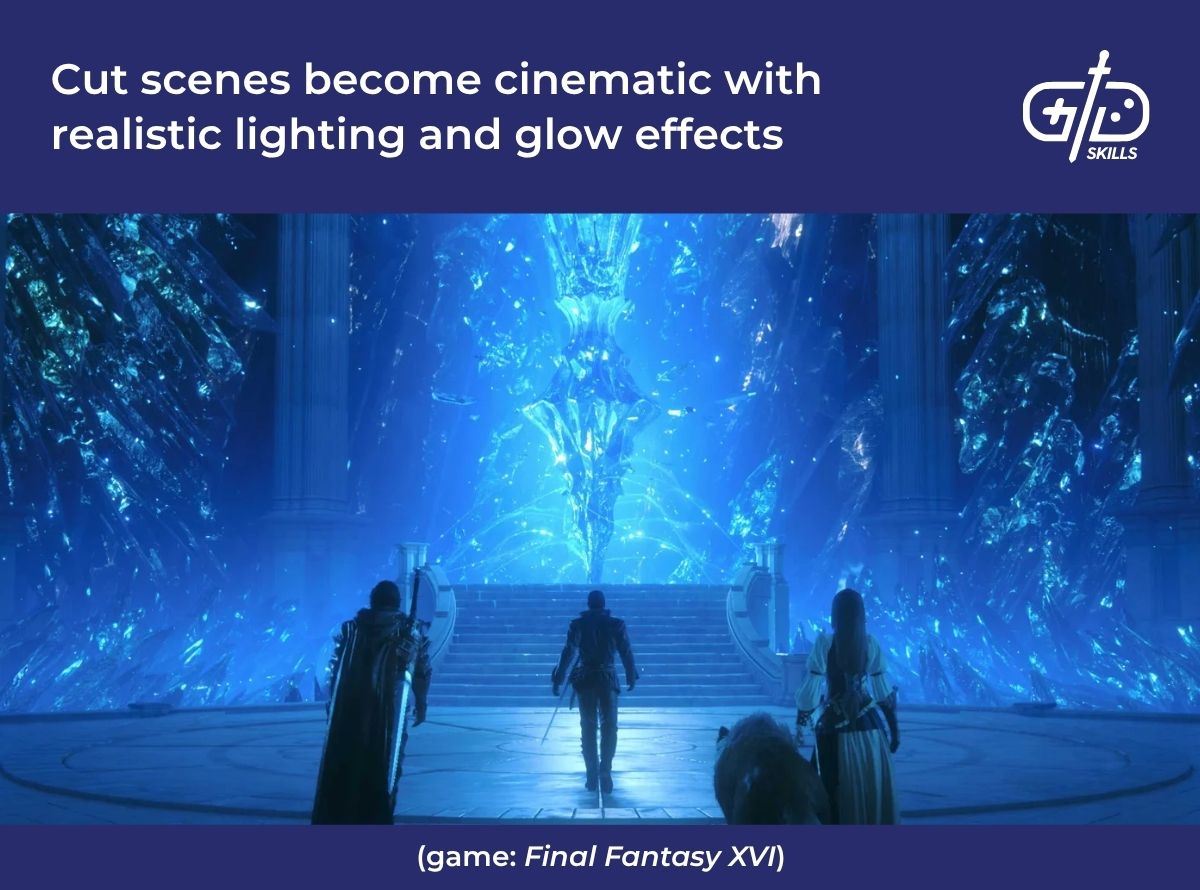

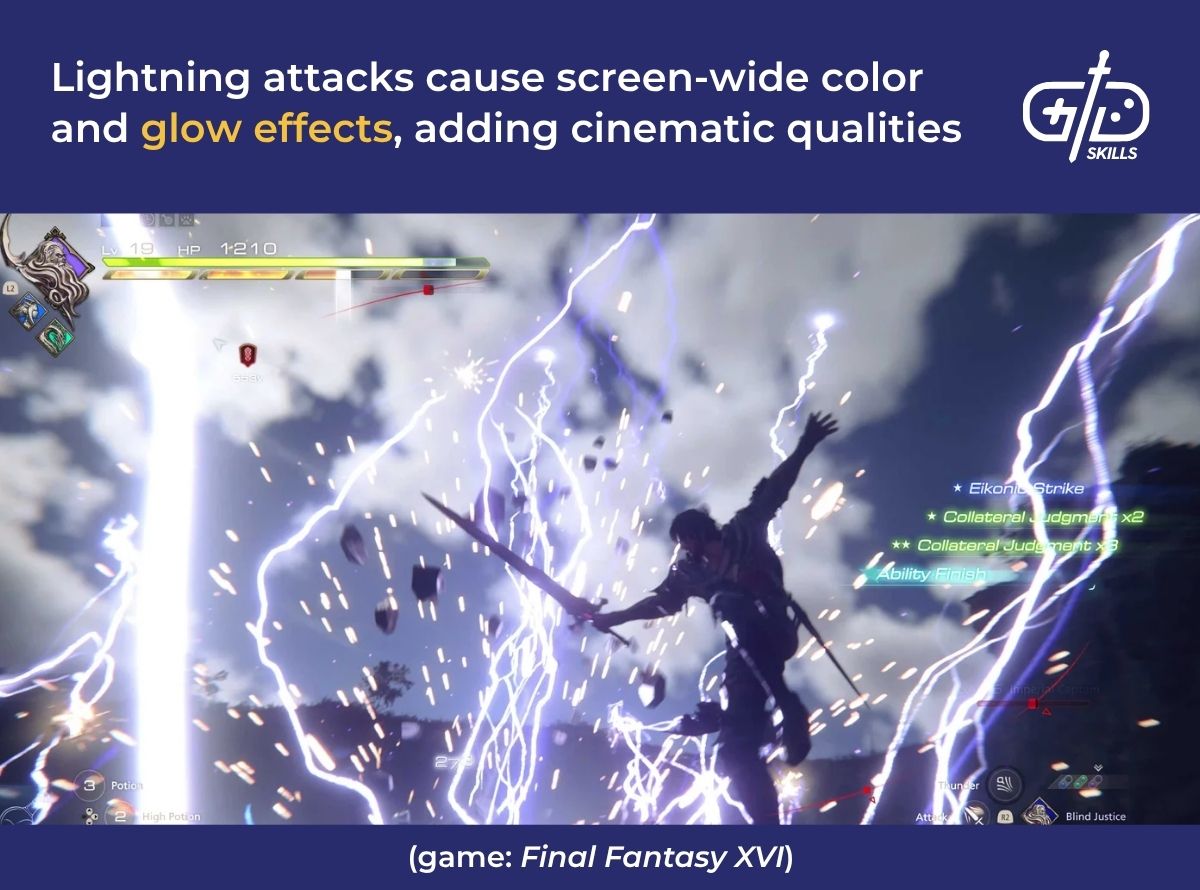

Final Fantasy XVI is a fantasy action RPG that’s known for using VFX for its spellcasting and elemental effects. The VFX stands out as it’s tied to the game’s lore and combat system, where every summon results in massive effects such as firestorms or lightning strikes. The impact of the move is apparent during combat, with wide areas of effect and splashes of color.

FFXVI uses real-time and particle VFX for Clive’s Phoenix abilities, which feature fiery wings and magic trails. The heat distortion and glowing embers evolve and upgrade as the player progresses. Evolving VFX reinforces players’ sense of mastery and is a clear sign of growth, motivating them to keep playing.

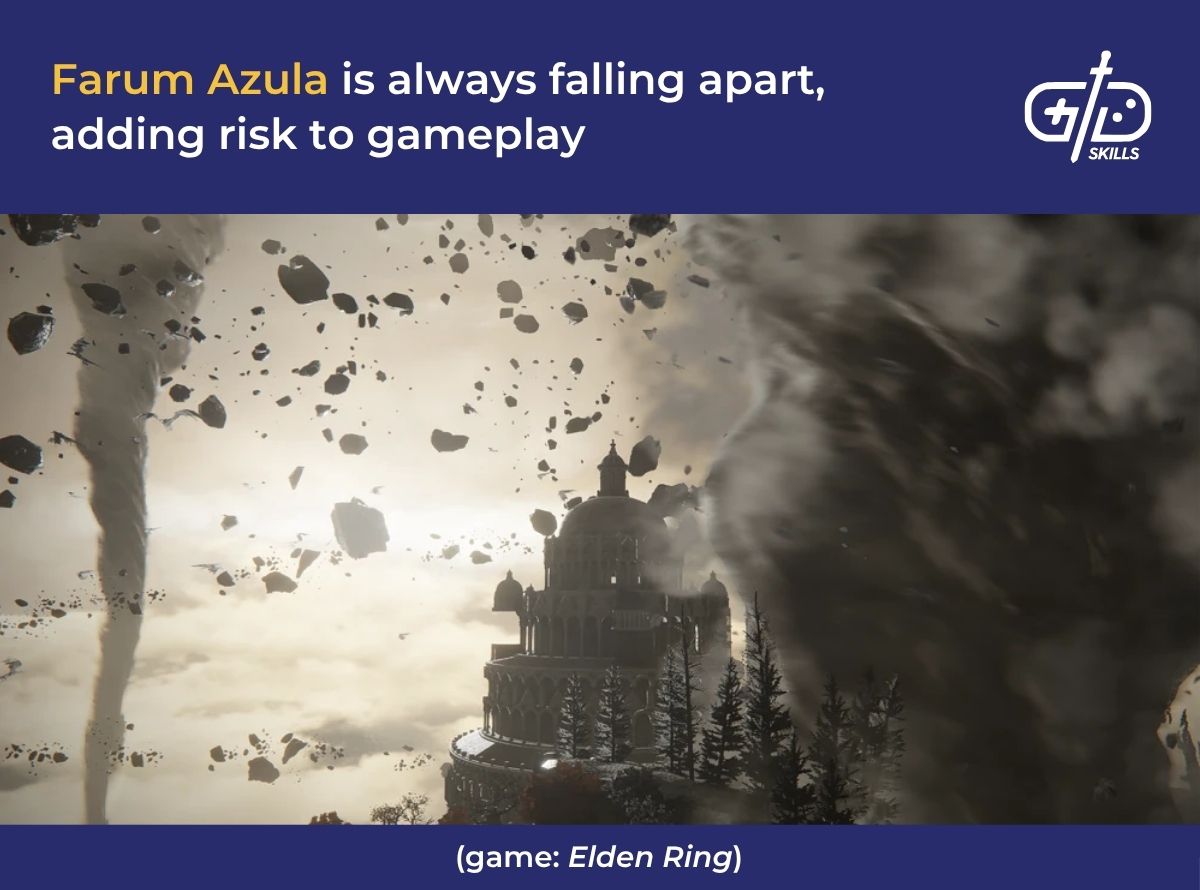

Elden Ring is another fantasy action RPG that makes use of VFX for environmental effects. The game’s open world is massive, so adding region-specific effects like fog and lighting highlights possible elements of lore and risk. A glowing door symbol appears to tell players there’s an overworld boss, which ties into the game’s fantasy elements. Magical aura effects around caves and entrances hint at hidden powers or relics, incentivizing exploration.

Elemental effects and shaders are apparent in Elden Ring’s boss arenas, which have fog walls with semi-transparent shaders to show players that danger waits beyond. Farum Azula’s arena comes with a sky full of swirling winds and flying debris that emphasize the risk and give combat an immersive feel.

God of War Ragnarök is an action fantasy game that blends gameplay with narrative through VFX. The game uses dynamic weather and elemental attacks that change based on the setting. Cinematic transitions connect scenes to one another with particle effects like fog or lighting.

Combat in God of War pays heed to lore, as the massive elemental effects connect to the characters’ mythical identities. Weapon trails and impact effects are a notable use of VFX. Kratos’ Leviathan Axe leaves a trail of ice and Atreus’ arrow shots trigger screen-wide lightning bursts that distort visibly, emphasizing the impact.

Street Fighter 6 is a fighting game with stylized visuals, blending 2D effects against a 3D environment. The 2D effects include stylized ink splashes for moves and hit sparks for successful attacks. Real-time motion effects ensure that the VFX stays in sync with the fast-paced gameplay, which builds up players’ adrenaline. Luke’s Sand Blast move fires a jet of compressed air at opponents, with exaggerated hit sparks.

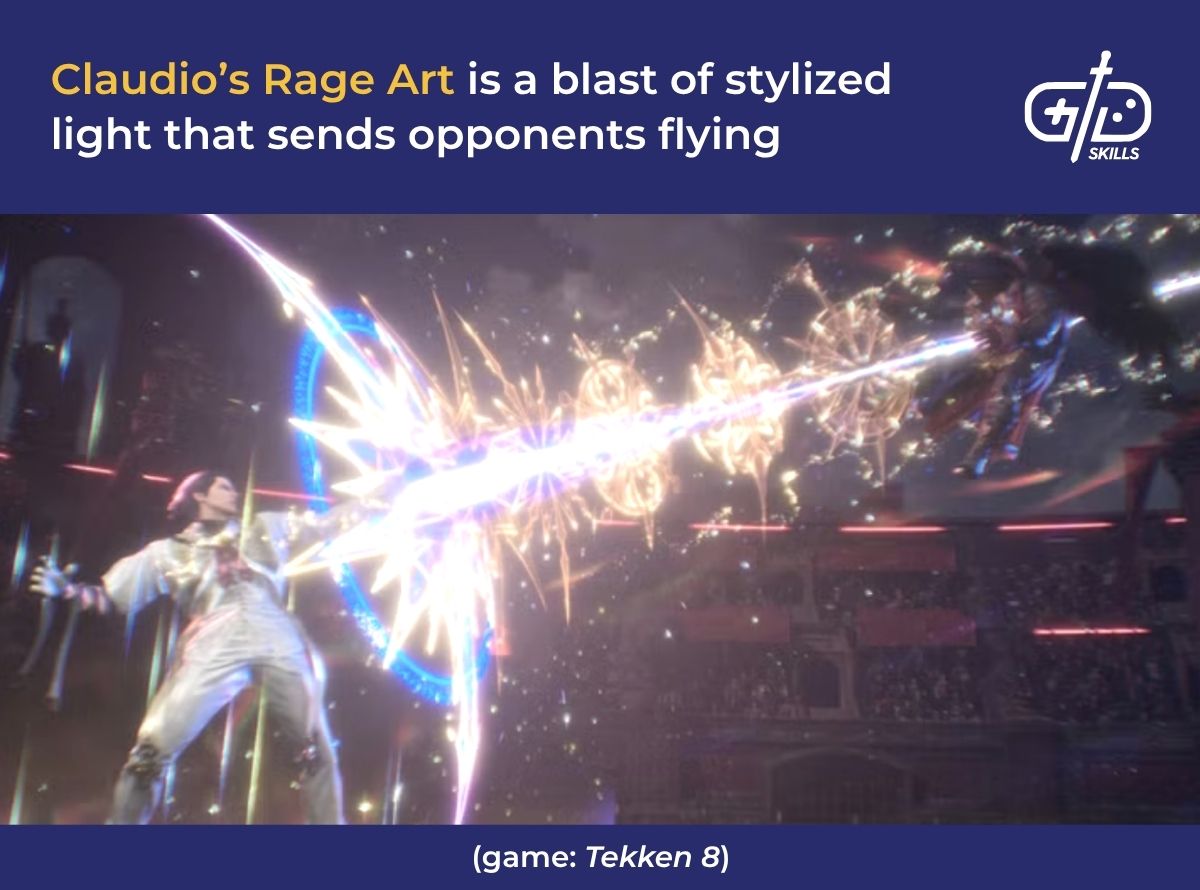

Tekken 8 is another fighting game that uses VFX for real-time lighting and physics effects to create a cinematic battle experience. Slow motion highlights successful hits and showcases player wins, while real-time lighting adds depth to movement. Together, these VFX make the game more immersive and engaging.
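Slow motion on a hit is typically implemented by scaling the time step that gameplay and effects advance by, while menus and input keep running in real time. Below is a minimal sketch with a hypothetical `TimeScaler` class and illustrative values; engines expose their own equivalents, such as Unity’s `Time.timeScale`.

```python
class TimeScaler:
    """Scales game-time delta to create hit-stop or slow-motion moments."""

    def __init__(self) -> None:
        self.scale = 1.0
        self._remaining = 0.0  # real-time seconds left in the current slow-motion window

    def trigger(self, scale: float, duration: float) -> None:
        """Start a slow-motion window, e.g. scale=0.25 for 0.5 s after a heavy hit."""
        self.scale = scale
        self._remaining = duration

    def game_dt(self, real_dt: float) -> float:
        """Convert a real frame delta into the delta gameplay and VFX should advance by."""
        if self._remaining > 0.0:
            self._remaining -= real_dt
            dt = real_dt * self.scale
            if self._remaining <= 0.0:
                self.scale = 1.0  # window over: the next frame runs at normal speed
            return dt
        return real_dt
```

Because particles and animations read the scaled delta, a single call slows the whole fight consistently, which keeps the highlighted hit readable.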

Tekken’s character-specific VFX is an additional plus, making every character seem important by emphasizing their unique traits and the varying range of fight styles. During Rage Arts, hero lighting and shaders work in tandem to spotlight fighters, and throwing in particle trails tells players when it’s safe to attack.

In fighting games like Tekken and Street Fighter, which prioritize fast-paced gameplay and stylized visuals, VFX indicates successful hits and timing through stylized splash effects and hit sparks. Clarity comes first, since players have to understand how to use moves to progress and win challenges. These genres stand out because they rely most heavily on VFX for gameplay, lore-building and combat.

What software is used for VFX in games?

Software used for VFX in games includes Blender, Unreal Engine 5 (UE5), Houdini, Unity and Adobe Photoshop. Unity suits smaller studios and indie devs, while Photoshop is the go-to tool for creating custom VFX assets. Blender and Photoshop are used in tandem to create assets, which are then imported into engines like Unity and UE5 to turn them into effects. Houdini is paired with UE5 or Unity in a similar way.

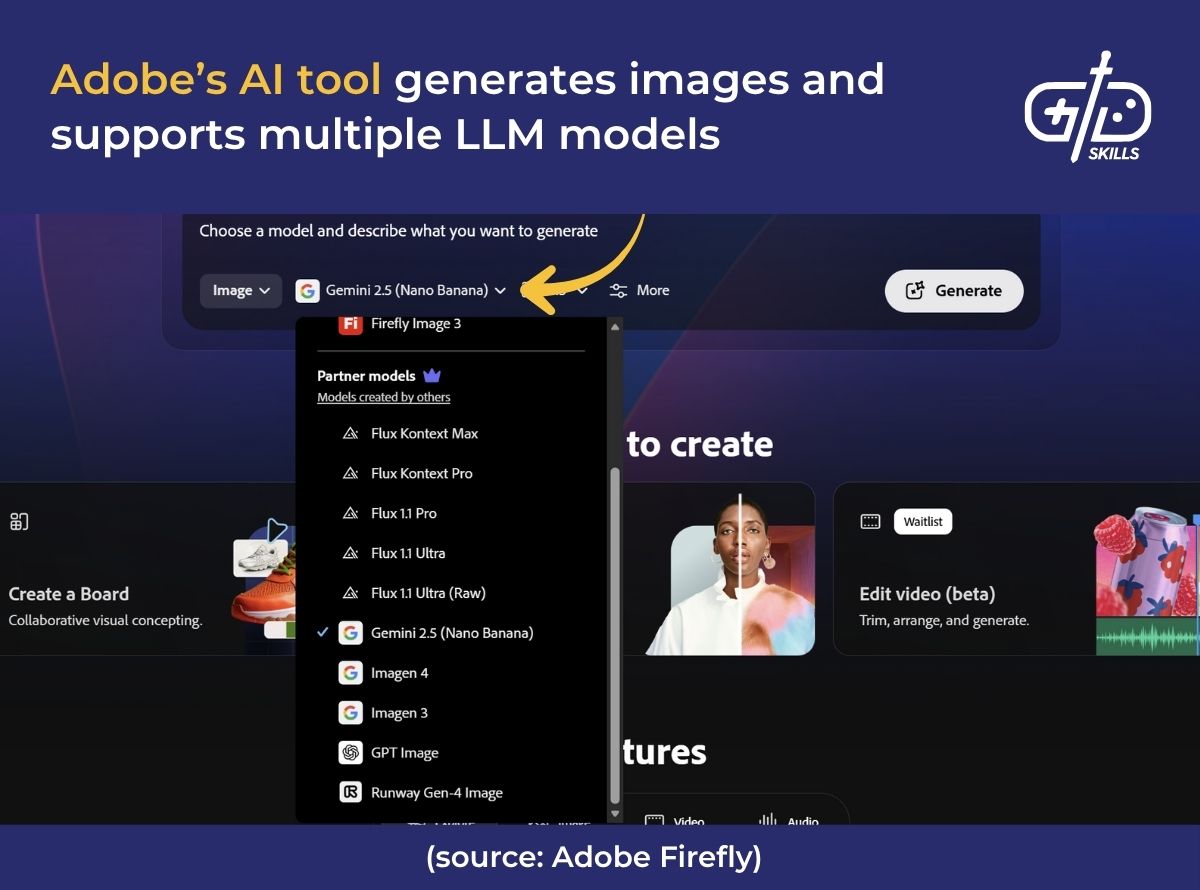

VFX artists use AI tools in certain cases, although these tools are comparatively new and not yet widely adopted across the industry. AI tools are useful for automating VFX asset generation and particle system setup. They can also help align effects with performance targets and player actions, making them efficient when combined with game engines.

Blender is used for 3D modeling, animation and simulation, with built-in tools for asset creation. The software is free and open-source, so artists can customize it freely, but it offers little to no direct engine integration: effects need to be exported to engines like UE5 to run in the game. Blender also has a steep learning curve for VFX, so it’s not ideal for beginner artists.

UE5 is an advanced game engine with a built-in VFX system for both particles and shaders. It delivers AAA-level graphics through the Niagara VFX system, plus Nanite for geometry and Lumen for lighting. UE5 doesn’t run well on low-end devices, so high-end hardware is necessary to produce high-quality VFX.

Houdini is asset creation software that artists use for crowd, fluid and destruction simulations. Its assets can be exported to game engines, and the program is known for creating cinematic-quality assets. Houdini is technical and advanced, so using it requires a high level of knowledge.

Unity is a popular engine for indie and mobile games and is lightweight compared with UE5. Unity has VFX Graph and Shader Graph for real-time effects, but they aren’t as developed as Niagara. The Unity Asset Store is a useful resource for beginners and devs on a budget, with scene packs and drag-and-drop tools, though it offers limited unique assets for stylization.

Adobe Photoshop is the industry-standard tool for 2D textures, concept art and UI VFX. Photoshop gives artists full control over their assets, and its exported textures work across engines, but it isn’t built for animated or 3D VFX. I create assets in Photoshop, then import them into Unity’s tools to build effects. I followed the same create-then-import workflow for a pinata explosion in Sort Express!, modeling the 3D pieces in Maya.

The pinata explosion effect revealed a hidden item when the pinata burst, with colorful papers scattering around. The game’s art style was chunky and soft, so 3D paper pieces were chosen to match it and to make the explosion feel large. I modeled multiple slightly bent 3D paper pieces in Maya to look like real pinata paper, then imported them into Unity’s particle system. Finally, I set the particles to emit these different paper pieces for the full scatter effect.
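The burst logic can be sketched in an engine-agnostic way: a one-off emission in which each particle picks one of several paper mesh variants and a color, plus a random upward-biased velocity and spin. This is not the Unity setup itself; the mesh names, colors and value ranges are hypothetical.

```python
import math
import random
from dataclasses import dataclass

# Hypothetical names for several slightly bent paper meshes.
PAPER_MESHES = ["paper_bent_a", "paper_bent_b", "paper_bent_c", "paper_bent_d"]
# Illustrative confetti palette (RGB, 0-1).
CONFETTI_COLORS = [(1.0, 0.3, 0.4), (0.3, 0.8, 1.0), (1.0, 0.85, 0.2), (0.5, 1.0, 0.4)]

@dataclass
class PaperPiece:
    mesh: str
    color: tuple
    velocity: tuple
    spin: float  # degrees per second

def burst(count: int, speed: float, seed: int = 0) -> list[PaperPiece]:
    """Emit a one-off burst of paper pieces, each with a randomly chosen mesh variant."""
    rng = random.Random(seed)
    pieces = []
    for _ in range(count):
        # Random direction on the upper hemisphere so paper flies up and out.
        theta = rng.uniform(0.0, 2.0 * math.pi)
        phi = rng.uniform(0.0, math.pi / 2.0)
        direction = (math.cos(theta) * math.sin(phi), math.cos(phi),
                     math.sin(theta) * math.sin(phi))
        s = speed * rng.uniform(0.6, 1.0)  # vary speed so pieces don't land in a ring
        pieces.append(PaperPiece(
            mesh=rng.choice(PAPER_MESHES),
            color=rng.choice(CONFETTI_COLORS),
            velocity=tuple(s * d for d in direction),
            spin=rng.uniform(-360.0, 360.0),
        ))
    return pieces
```

Mixing several mesh variants with random spin is what stops a scatter of identical pieces from looking copy-pasted.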

What is the best VFX course for games?

The best VFX course for games is the Godot course VFX for Games: Beginner to Intermediate on Skillshare, taught by Gabriel Aguiar. The course is tailored to indie devs and beginner VFX artists, with over four hours of video content and 70 downloadable lessons. Gabriel is a trustworthy source, since he’s an experienced VFX dev with an established YouTube following.

During VFX learning and training, the main takeaway needs to be how to create VFX that’s emotionally engaging and clear. Prioritizing performance optimization and narrative integration supports both goals, as identified by Vicente and Bautista from Mapúa University in 2025. These essentials are covered in Gabriel’s Godot course, which is part of the reason it’s highly recommended.

The Godot course covers both 2D and 3D VFX in Godot, including how to make real-time VFX and assets optimized for gameplay. It touches on Godot’s particle system as well as texture and shader creation, which is a useful knowledge base to start with. Scene-based VFX and script integration are also covered, which is ideal for devs working on lore-heavy and RPG-style games.

Gabriel also teaches a Unity course on Skillshare. It has 61 lessons totaling six hours, covering different types of effects and the core principles of VFX. It’s a deep dive into creating stylized VFX, making it ideal for mobile and indie devs, but it requires a Skillshare subscription.

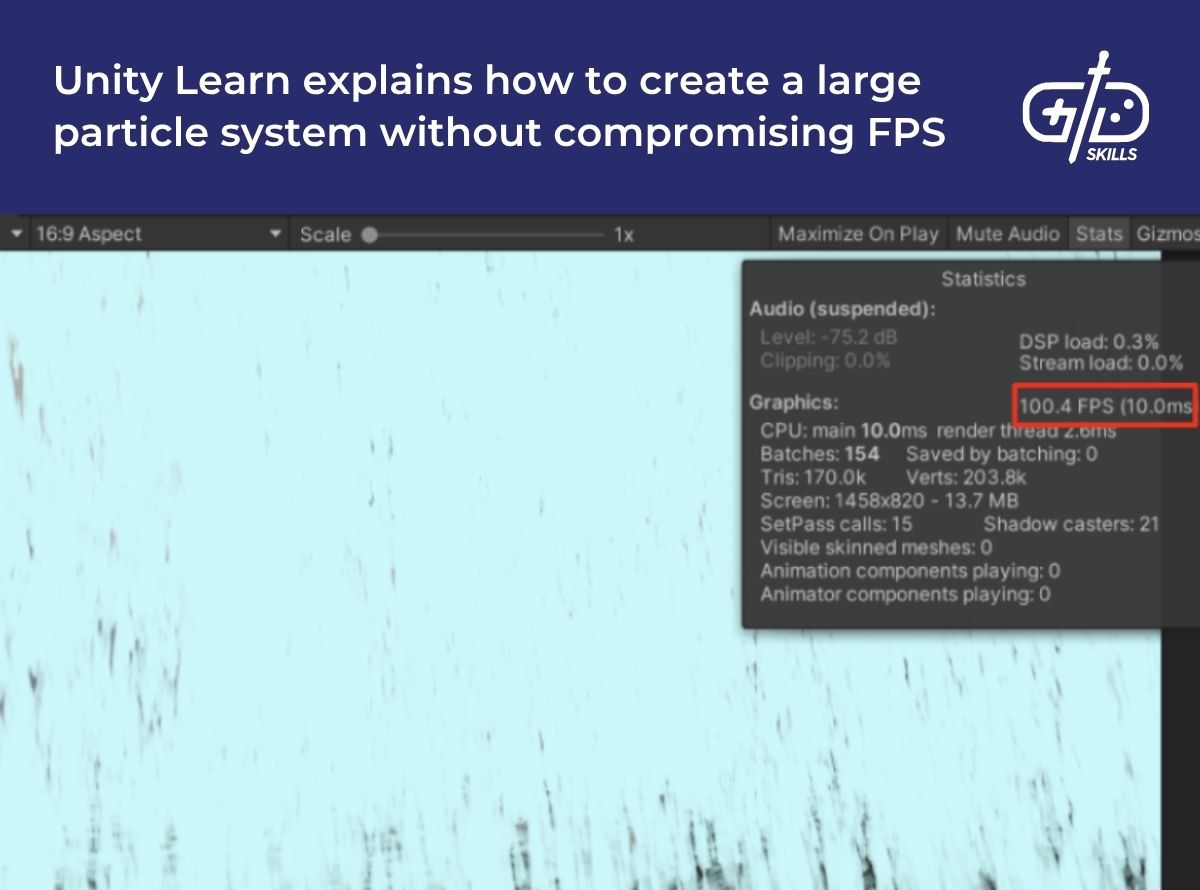

Unity offers additional VFX training courses, including beginner-friendly and advanced options. Unity Learn’s Creative Core: VFX course covers basic VFX concepts using Unity’s particle system and VFX Graph. The free course is two and a half hours long, with interactive exercises included, but it doesn’t offer a certification.

More advanced Unity courses are available on other platforms like Udemy. Udemy’s Creating Realistic VFX in Unity is geared toward intermediate and advanced VFX artists, with up to six hours of content. It’s not free, but it covers topics like particle emission, physics simulations and C#, Unity’s scripting language.

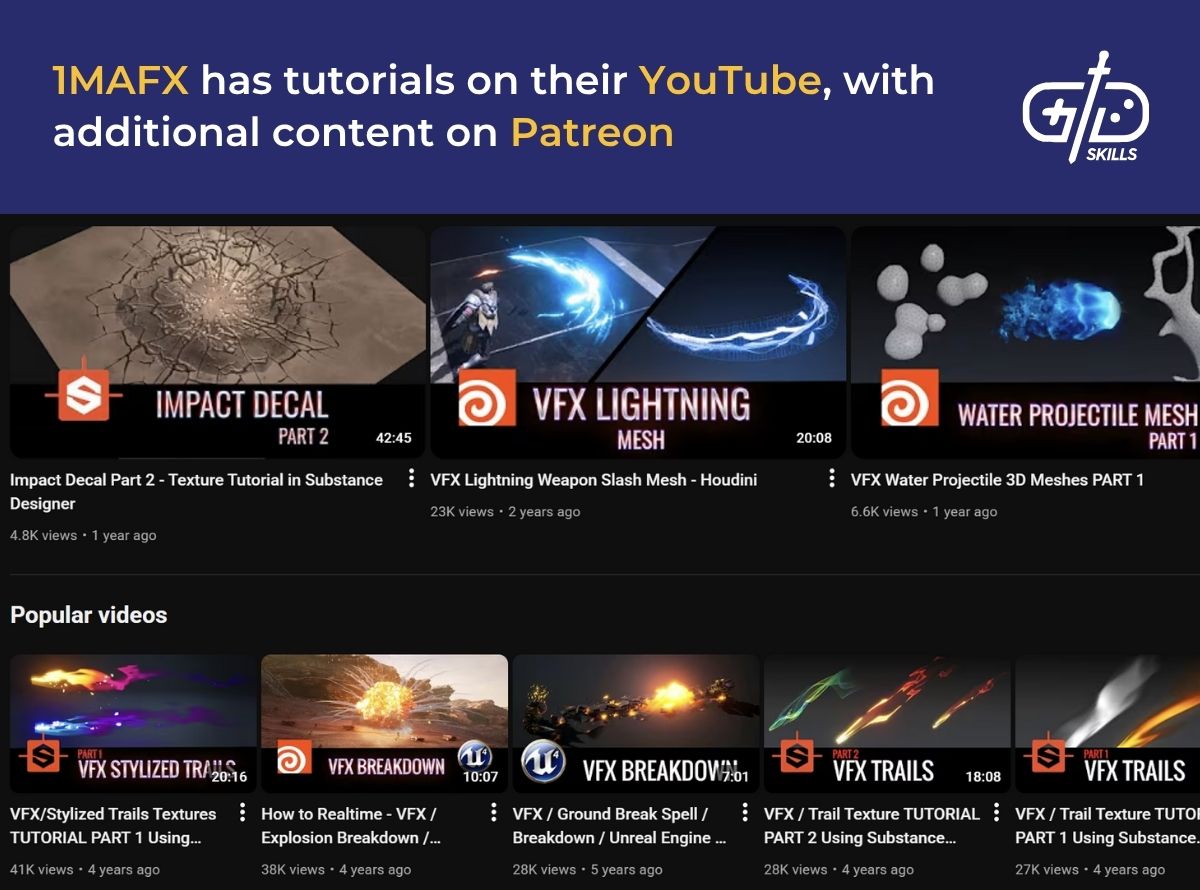

1MAFX has tutorials focusing on both Unity and Unreal, with a level-based learning system that makes it ideal for indie devs and hobbyists. The tutorials break down VFX game assets, particle systems and shaders.

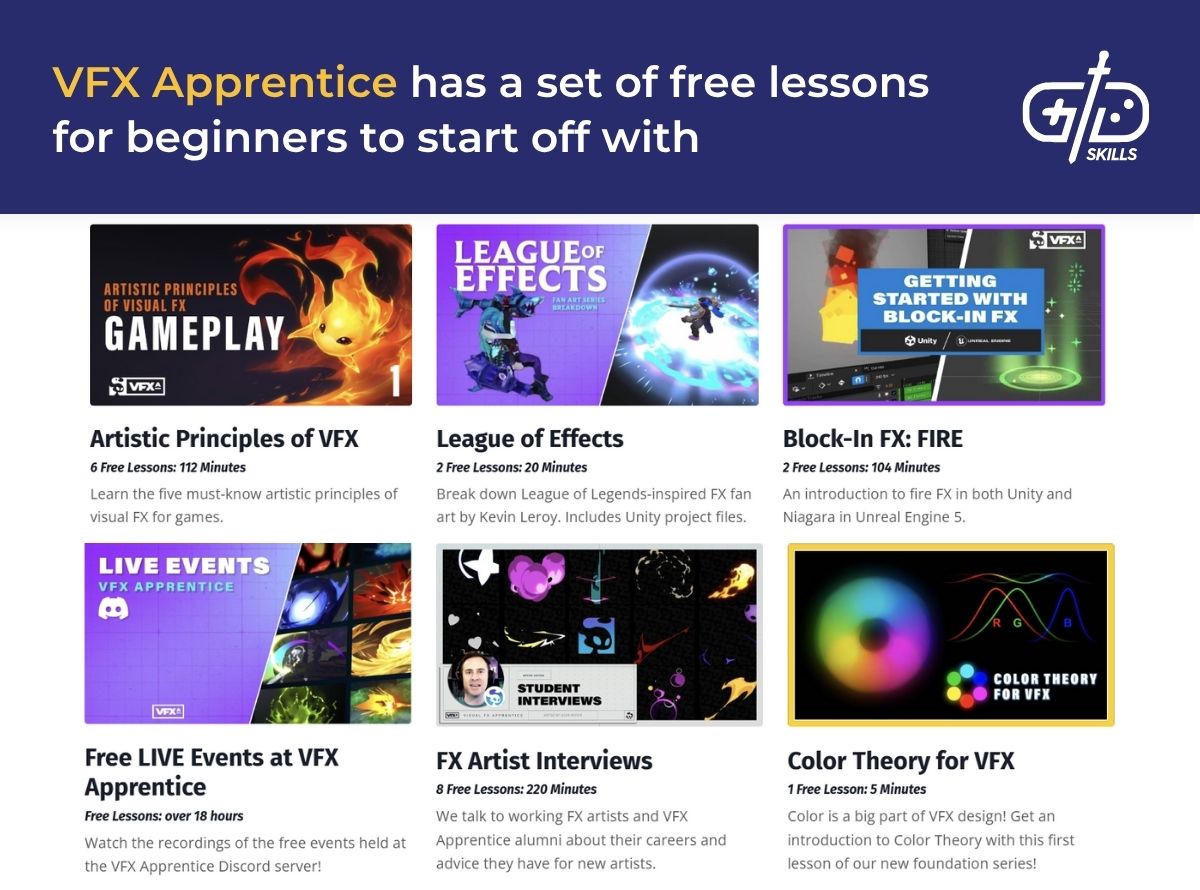

VFX Apprentice has courses that focus on VFX for games and animation using software like Houdini, EmberGen and LiquiGen. The eight-week bootcamp covers stylized 2D and 3D VFX alongside shader creation and real-time effects. It’s recommended for intermediate to advanced learners since the bootcamp delves into studio-level VFX. MoreVFXAcademy is another platform for advanced learners. There’s a full curriculum on AAA studio techniques, with a starting point for beginners.

DOUBLEJUMP Academy is another professional training program that gives learners practical training by simulating AAA production processes. The program teaches Niagara from Unreal and Houdini’s asset creation, as well as scene integration. The practical training also helps students develop production habits and learn how to collaborate across large teams to produce efficient results.